
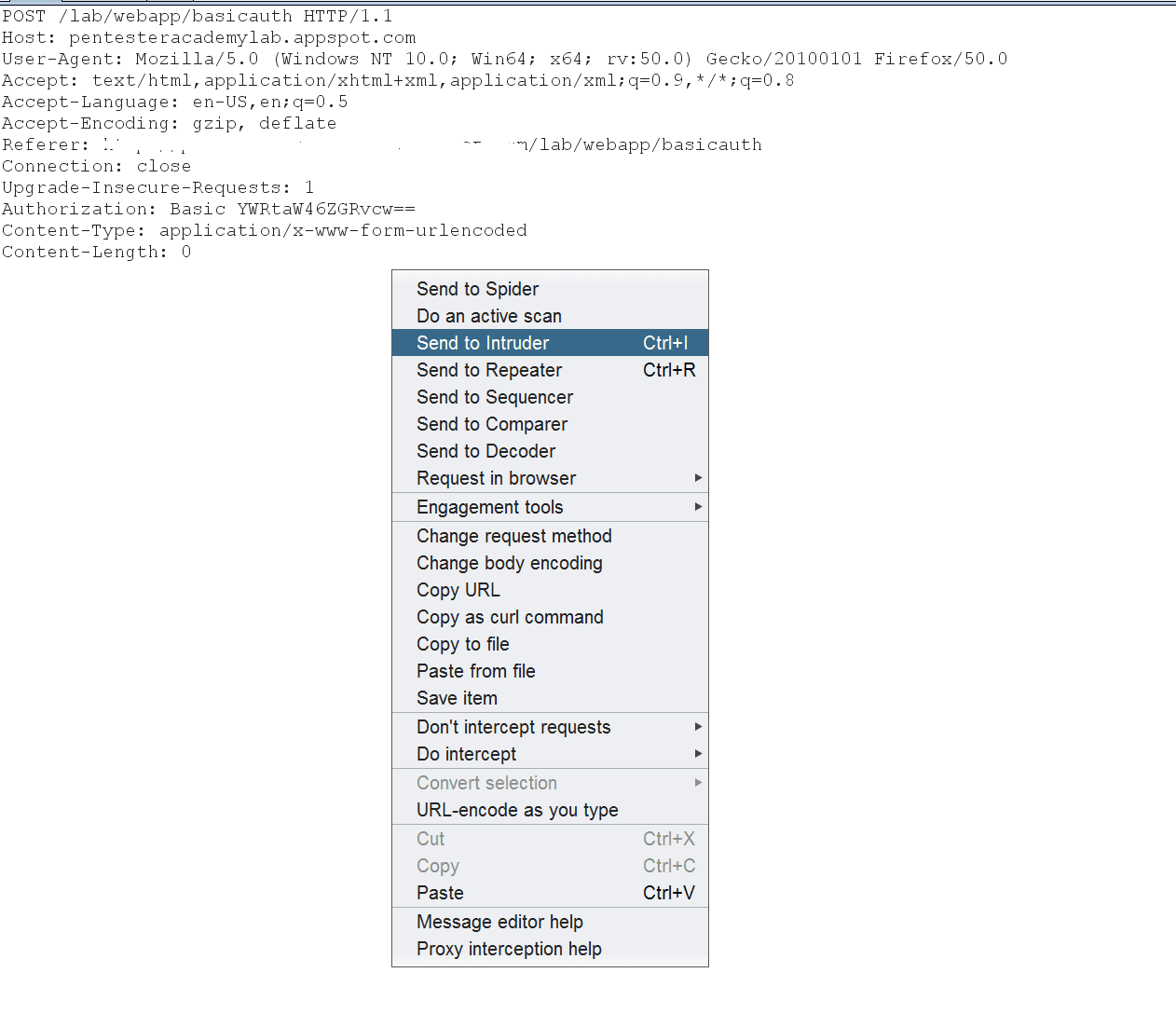

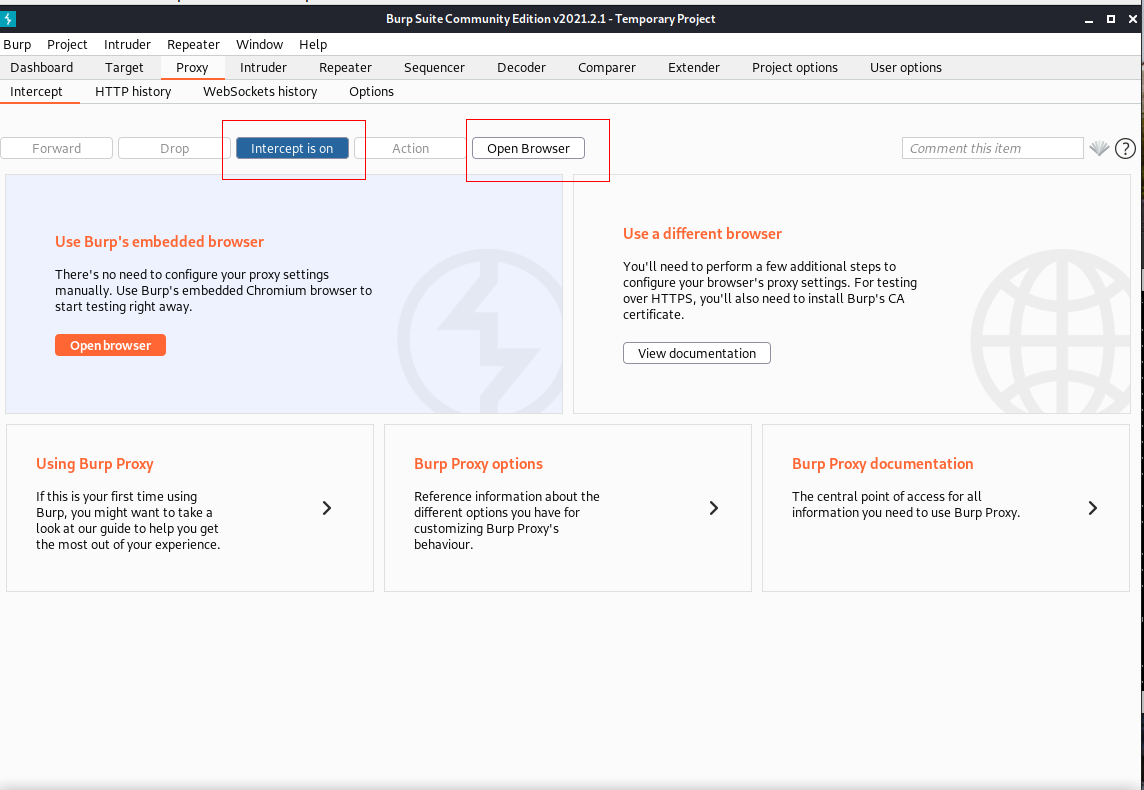

You can make assumptions about the application based on the very simple information shown in the preceding list. For example, an IIS web server is more likely to host an application developed in ASP.NET than in PHP. While PHP is still available on Windows (via XAMPP), it is not as commonly encountered in production environments. In contrast, while there are Active Server Pages (ASP) processors on Linux systems, PHP or Node.js are much more common these days. While brute-forcing for files, you can take this into account when attaching the extension to the payload.

The robots.txt file is generally interesting, as it can reveal "hidden" directories or files, and can be a good starting point when brute-forcing for directories or files. The robots.txt file essentially provides instructions for legitimate crawler bots on what they're allowed to index and what they should ignore. This is a convenient way to implement this protocol, but it implies that the file must be readable by anonymous users, including yourself. A typical robots.txt file is a short list of User-agent lines, each followed by Disallow rules naming the paths that crawlers should skip.

Credential brute-forcing is just one of the many uses for Intruder. You can get creative with custom payloads and payload processing.

Consider a scenario where the application generates PDF files with sensitive information and stores them in an unprotected directory called /pdf/. The filenames appear to be the MD5 digest of the date the file was generated, but the application will not generate a PDF file every day. You could try to guess each day manually, but that's not ideal. You could even spend some time whipping up a Python script to automate the task. The better alternative is to leverage Burp Suite to do this with a few clicks. This has the added benefit of recording the attack responses in one window for easy inspection.
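
As a rough sketch of the Python script mentioned in the /pdf/ scenario above, the following generates one candidate filename per day in a date range. The YYYY-MM-DD date format, the .pdf suffix, the example date window, and the function name `candidate_pdf_paths` are all assumptions made for illustration; the text only says that the filenames look like an MD5 digest of the generation date.

```python
import hashlib
from datetime import date, timedelta


def candidate_pdf_paths(start, end, date_format="%Y-%m-%d", directory="/pdf/"):
    """Yield (day, path) pairs, one per day from start to end inclusive.

    The date format and the ".pdf" suffix are assumptions for illustration;
    the real application may format the date differently before hashing it.
    """
    day = start
    while day <= end:
        # Hash the formatted date string and use the hex digest as the filename.
        digest = hashlib.md5(day.strftime(date_format).encode("ascii")).hexdigest()
        yield day, f"{directory}{digest}.pdf"
        day += timedelta(days=1)


if __name__ == "__main__":
    # Print one candidate path per day for a hypothetical one-week window.
    for day, path in candidate_pdf_paths(date(2018, 1, 1), date(2018, 1, 7)):
        print(day.isoformat(), path)
```

Each path could then be requested against the target and the response codes compared to spot the days on which a file exists. If the guessed date format produces no hits, trying other formats is a matter of changing the `date_format` argument.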
